Modern software has grown significantly in complexity and scale, making it harder for development teams to maintain large, distributed codebases. This growth increases the likelihood of well-known software challenges such as faster error propagation, increased technical debt, and difficulty keeping up with rapid development cycles. In this context, Artificial Intelligence (AI) is starting to change how software development is performed, from requirements gathering and code generation to intelligent debugging and documentation support. However, most of these tools work in isolation and cannot take the full project context into account. Software teams need to go a step further by adopting context-aware AI systems that understand the broader project, adapt to specific requirements, and support decision-making throughout the entire development cycle.

The AI4SWEng Development Lifecycle Approach

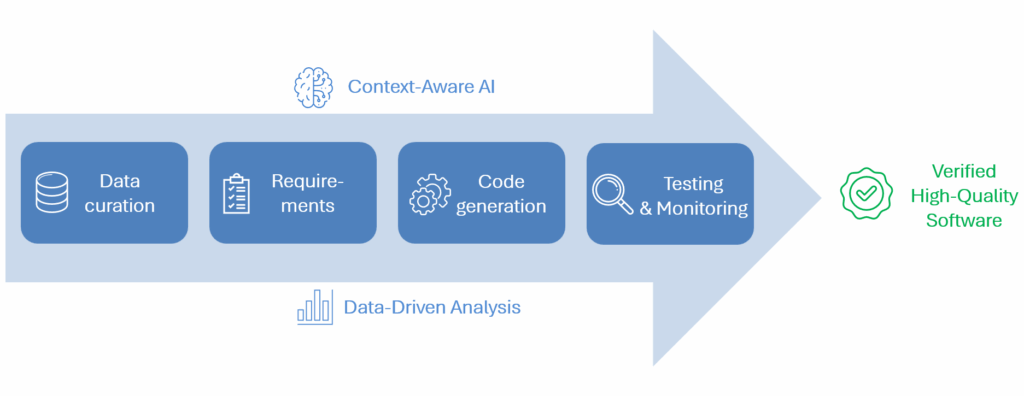

AI4SWEng combines large language models (LLMs) with data-driven analysis throughout the software development cycle. The LLMs adapt to the codebase and requirements of each project, allowing them to understand natural language inputs, make architectural decisions, and generate verified and tested code. The AI4SWEng architecture is designed to work with huge volumes of data and code, maintaining accuracy even as the complexity of the project increases. It also analyses the generated software artefacts, including requirements documents and test results, to detect possible errors or inconsistencies and suggest improvements. The system gathers information throughout the entire pipeline, which increases the knowledge available to the LLMs and allows the results to be refined continuously over time.

From Natural Language Input to Verified Code

Work Package 3 (WP3) of AI4SWEng addresses the full lifecycle of software development, from informal functionality descriptions to verified code. WP3 starts by extracting, cleaning and curating the project data before it is used by the LLMs. This data includes source code, requirements, documentation and test results. Curating it first ensures that the LLMs operate on high-quality, project-specific data rather than relying only on generic patterns. From there, WP3 translates natural language into formal requirements, designs architectural components, and generates code aligned with the project’s design constraints. To manage the large volumes of data present in real-world projects, WP3 combines fine-tuned LLMs with Retrieval-Augmented Generation (RAG), allowing it to reason over both documentation and the codebase, going beyond the context window limitation of LLMs.
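
To make the RAG idea concrete, the sketch below shows the retrieval step in its simplest form: instead of passing the whole project to the model, only the few chunks most relevant to the task are selected and placed in the prompt. It is an illustration under stated assumptions, not the AI4SWEng implementation. The function names, the sample chunks, and the use of plain term-frequency cosine similarity (standing in for the learned embeddings a real system would likely use) are all hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9_]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query, best first."""
    q = Counter(tokenize(query))
    ranked = sorted(
        chunks,
        key=lambda c: cosine(q, Counter(tokenize(c))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, chunks: list[str], k: int = 2) -> str:
    """Assemble a prompt that carries only the retrieved context."""
    context = "\n---\n".join(retrieve(query, chunks, k))
    return f"Context:\n{context}\n\nTask: {query}"


# Hypothetical project data: a requirement, a code fragment, a README line.
PROJECT_CHUNKS = [
    "REQ-12: The login service must lock an account after five failed attempts.",
    "def lock_account(user_id): mark the account as locked in the user store",
    "README: run the build with make all and deploy using the release script.",
]

if __name__ == "__main__":
    task = "Write a test for account lock after failed login attempts"
    print(build_prompt(task, PROJECT_CHUNKS))
```

Because only the top-ranked chunks enter the prompt, the amount of project data that can be indexed is no longer bounded by the model's context window; the window only limits how much retrieved context is used per request.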

With code generation complete, WP3 shifts its focus to validation and quality assurance. This stage analyses both generated and existing code to detect inconsistencies, defects, and licensing issues. The Testing Automation Tool (TAT) enables the automation of unit and integration tests, using fine-tuned LLMs to improve test coverage. This is complemented by a performance monitoring tool that collects data at runtime and establishes feedback loops for continuous model refinement. All the WP3 tools are designed to work together, sharing data across the different stages and enabling a more complete understanding of the project.

Benefits of the AI4SWEng Approach

Automating repetitive and error-prone tasks gives development teams more time to focus on higher-level tasks that require human supervision, improving productivity. When AI tools handle formal requirements processing, code analysis, and test generation, the probability of error propagation throughout the pipeline decreases. Detecting these issues early reduces the cost and time of fixing them in later development stages, a well-known source of inefficiency in large software projects. Altogether, these capabilities shift software development from a process dependent on individual vigilance to a systematic, AI-informed workflow that supports quality and lets developers focus where they add the most value.